There Are No Silos

Your Lack of Privacy and the Illusion of Discrete Information

I was having a conversation with a friend about our privacy, or rather, about how much of it is clearly gone. In broad terms, it was ultimately about how much information companies have on us, how interconnected data is as an industry, etc.

The next day, I made an offhand, very left-field reference to the movie Death to Smoochy in my Discord server. I didn’t look it up. I didn’t see it anywhere. It is not having a moment. I just mentioned it in passing.

Later that evening, I opened YouTube on my television. The full movie was sitting at the top of my recommendations. Google/Alphabet does not own Discord, and I didn’t type this into my phone where Google Keyboard could tell them about it. This was full-on desktop typing.

Possible explanations include cross-device tracking, account linkage, data brokers combining signals, or “coincidence” amplified by recommendation systems clustering interests. I don’t honestly care much which is responsible.

The thing I’m interested in is how seamlessly two discrete platforms and activities can use my profile. Because if that’s how it works, everyone who wants my private information already has it (yours, too).

Fully Integrated

It’s easy to imagine private information as many pieces stored (and thus acquired) discretely. Company A has one piece. Company B has another. Maybe Company C has something else entirely. The picture that forms is one of separate vaults, each holding a fragment.

But entire industries exist to connect data. Data brokers, ad exchanges, identity-resolution services, and fraud-detection networks focus on linking identifiers across systems. They continuously hash email addresses, fingerprint devices, correlate addresses, and stitch fragments of a person’s profile together.

As such, the question isn’t “who has my data?” but “who doesn’t?”

Once a fragment of you enters that ecosystem at any point, it doesn’t stay local to that point. It immediately travels everywhere it needs to. If Company A learns something about me and Company B learns something else, those pieces aren’t isolated; they’re integrated within a market designed to reconcile them. No single company needs to collect everything, or even more than one thing.
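To make the reconciliation step concrete, here is a minimal sketch of how two fragments could be joined on a hashed email address. It is an illustration under stated assumptions, not any particular vendor's method: the function names (`hashed_email`, `merge_fragments`), the record fields, and the sample data are all hypothetical, and real identity-resolution systems draw on far more signals than a single email.

```python
import hashlib
from collections import defaultdict


def hashed_email(email: str) -> str:
    """Normalize an email address and return its SHA-256 hex digest."""
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def merge_fragments(*sources):
    """Merge records from any number of sources that share a hashed email."""
    profiles = defaultdict(dict)
    for records in sources:
        for record in records:
            key = hashed_email(record["email"])
            # Everything except the raw email becomes part of the joined profile.
            for field, value in record.items():
                if field != "email":
                    profiles[key][field] = value
    return dict(profiles)


# Hypothetical fragments held by two unrelated companies.
company_a = [{"email": "Pat@Example.com", "recent_topic": "an obscure movie"}]
company_b = [{"email": " pat@example.com ", "device": "living-room TV"}]

profiles = merge_fragments(company_a, company_b)
```

Neither company in this toy example holds both facts, and neither shares the raw address. Because both normalize and hash the email the same way, the two records still land under one key, and the merged profile contains the topic and the device together.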

A few decades ago, this would have been scoffed at as conspiracy talk. And in a sense, it is a conspiracy of coordinated economic interests. Hardly a spy thriller, but that’s what it is.

The moments people tend to worry about (the request for verification, the prompt to confirm identity, the formal acknowledgment of who you are) stop looking like moments of discovery. The motive isn’t to get more information from you if they already have it (and they do).

Instead, it’s about stabilizing the relationship between you and the system.

Process

Confirming a discrete piece of info doesn’t make a company recognize you; it already knows who you are. The data is already there. Thus, the request doesn’t transform the data. It transforms you.

Before formalization, we can still ask, “How do they know that?” We can still imagine the pieces as separate. And in this situation, one may have a moment where the system’s awareness feels uncanny. For instance, if I hadn’t already learned to stop worrying and love the bomb, I would have found the Death to Smoochy recommendation fuckin’ crazy, maaaaaaaaaaan. This is a type of friction that could interrupt “autopilot” activity (the best kind of activity for companies that don’t want scrutiny).

Formalization smooths that over. I laughed at the algorithm! When the system “confirms your identity,” what really happens is that it turns something uncanny into something procedural. It moves from “Why is this happening?” to “This is how it works.” The relationship becomes explicit.

And if we’re serious, this only prevents a minor break in immersion anyway. A single moment of friction rarely alters behavior in any significant way. Most people continue, and that’s what really matters. Even if we refuse in that moment, the system doesn’t meaningfully change, and neither does what the system knows about us. Again, if one of them knows it, functionally, they all do.

Withholding information from one company doesn’t unwind the network that has already been built. If one participant doesn’t get a fragment, another already has something close enough.

None of this hinges on a single upload, checkbox, or field. It runs on participation at scale. And that participation has been slowly normalized over the course of decades.

Basically, I am trying to caution against confusing local refusal with structural refusal.

Misplaced Focus

I regularly see people all over the internet getting pulled into arguments about an individual company or, even more commonly, what to do in an individual moment: this platform asked me to verify, that service wants me to upload something, this app is requesting information it didn’t use to. Those moments feel like the battleground because they’re when the system becomes legible in one’s everyday experience.

But those moments are endpoints, making them a “fork” only to the extent that any other end-user decision is (read: not much of one).

The real mechanism is the set of intermediaries and standards whose job is to link information across contexts; an industry with products, contracts, and documentation.

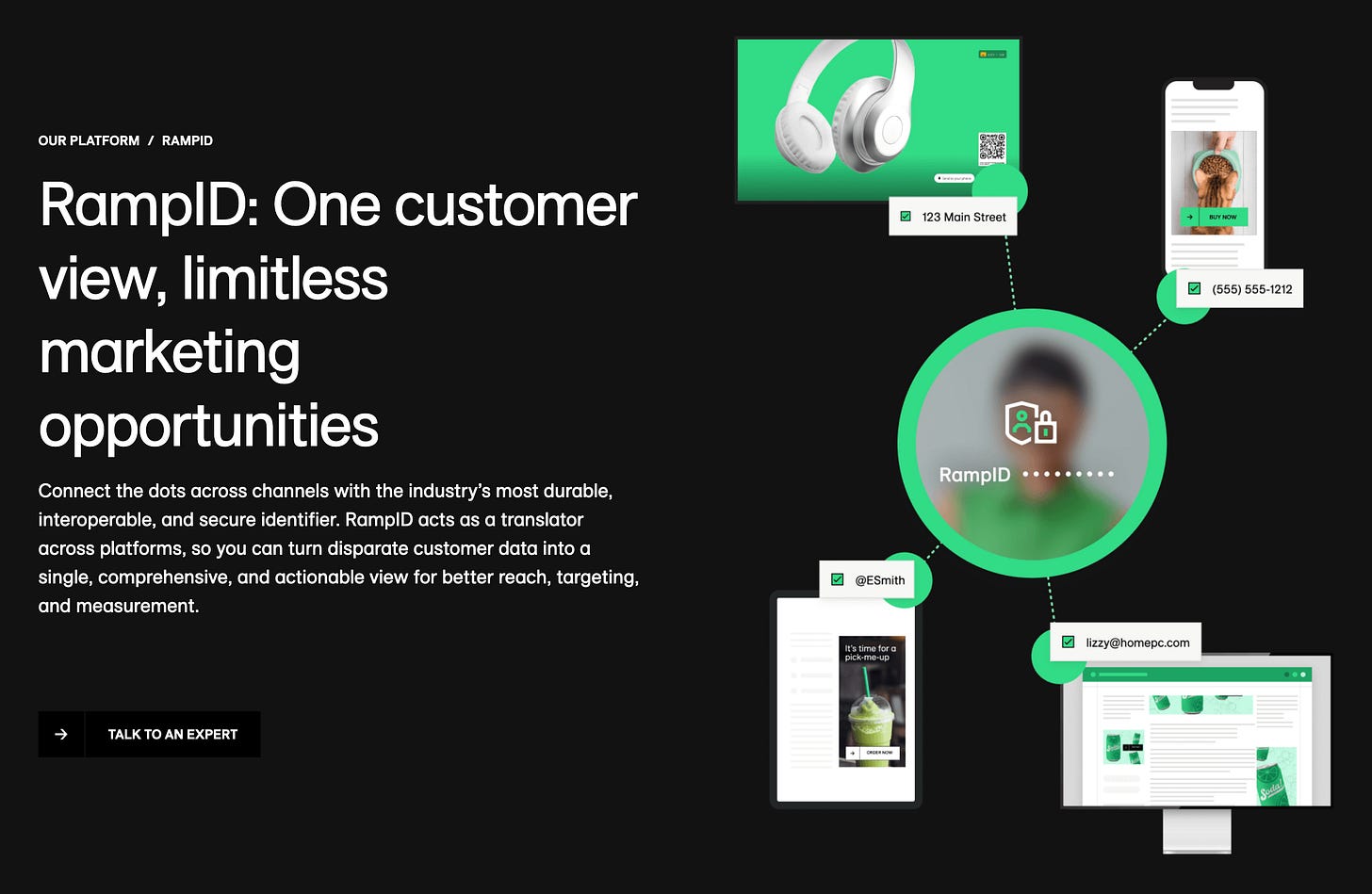

Systems like LiveRamp’s RampID explicitly link “offline” identity data with online identifiers so that information can be processed and matched across databases, companies, browsers, and devices tied to the same persistent identifier. That isn’t paranoia; it’s in the product documentation.

Hell, it’s in their pretty damn bold marketing copy! They correctly assume the everyday consumer isn’t going to see their website; I wouldn’t have had I not decided to write this piece. Despite knowing all of this happens, it is still pretty jarring to go on their website and have them so glibly declare “privacy is over” in so many words.

But it is over, at least as long as our current system exists. Sure, one can probably live a relatively private life in terms of other people around them, but in terms of one’s interaction with the outside world through literally any mediating platform? Absolutely not.

Not that people are foolish for reacting to prompts for information; the prompts are the only part we’re supposed to see. But they’re not where the profile is built, and they’re not where the profile travels.

This is where the notion of “privacy hygiene” can come into play, blaming the consumer for capital’s sins. While caution and limiting what you share are understandable, framing structural integration mainly as personal cleanliness or discipline shifts responsibility away from broader systems. It becomes easier to see exposure as a personal failure to control rather than a consequence of the infrastructure’s design.

We’ve seen this plenty, with “media literacy” often presented as the solution to platforms and algorithms whose incentive structures amplify outrage and/or misinformation by design. Adjacent: “Vote with your dollars” is offered as the solution to supply chains and labor regimes that no individual purchasing decision can meaningfully restructure.

In each case, the burden shifts to the user; the architecture remains intact, while individuals are told to #DoBetter within it. But no choice given to us is designed to affect the capabilities of those who give us that choice. We do not have control, and that is a scary thought.

Grant me the serenity to accept the things I cannot change, courage to change the things I can, and wisdom to know the difference.

A lot of what I write about is the lack of control we have as modern citizens, and one could interpret this as a form of surrender. But I implore people to understand that I am not asking them simply to accept that they cannot change certain things, but to develop the ability to recognize what they cannot change and to stop expending effort on those things.

Explicitly, I must repeat that neither I nor any other individual can offer you a solution; we can only describe what we observe.

Change can only happen when conditions and consciousness align.

Conclusion

I am an optimistic person. But optimism isn’t magical thinking; realistic optimism requires understanding the scale of the thing you’re dealing with. It requires identifying where power actually sits, which is 99.9~% of the time not where it is visible to the everyday prole. I feel the greatest sense of defeat when I see people treat a consumer-level decision as The Problem™—and a simple action, like switching apps or changing a setting (or, to be frank, protesting), as its solution.

I don’t begin to feel optimistic until I see people correctly locating the levers that are intentionally kept hidden. Not because seeing them immediately dismantles anything, but because clarity precedes leverage.

We can’t meaningfully intervene in a system we’re misidentifying. In fact, this is ultimately what I spend time on with these various issues: we need to get to the bottom of things before anything will change meaningfully.

Once that’s understood, at least we’re arguing about the right thing.