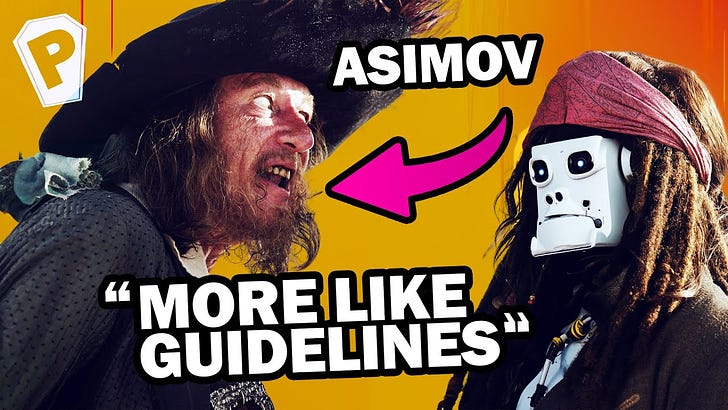

Isaac Asimov's Laws of Robotics are just Capitalist Propaganda

There’s a significantly less professional-sounding (but more fun) version of this post as a video.

The Three Laws of Robotics, as formulated by Isaac Asimov, are:

1. A robot may not injure a human being or, through inaction, allow a human being to come to harm.

2. A robot must obey the orders given to it by human beings, except where such orders would conflict with the First Law.

3. A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

These laws sound neutral and well-considered. They are meant to answer our fears about “intelligent” robots, but our fears are also the best way to take advantage of us.

According to Marx, ideology is a system of ideas, beliefs, and values that reflects and serves the interests of the dominant class, obscuring the realities of class struggle and perpetuating existing power relations. While I am not alleging Asimov intended to create ideology in this sense (as with Hbomberguy’s “let’s not talk about systems,” the intent doesn’t really matter if the ideology directs people away from discussing the rulers’ power), that is exactly what he did.

Protection of Humans

Harm is not objective; it is subjective. Further, what prevents harm to one group may harm another — and I would argue that “what's good for the goose may not be good for the gander” is probably best applied along class lines (especially given that in this situation, the robots are “the means”).

The First Law cannot ensure the protection of all humans due to the inherent contradictions and conflicts of interest within capitalist society. Since harm is subjective (and those in power often determine definitions), the law is incapable of providing universal protection. Instead, it is likely to reflect and reinforce the priorities and interests of the capitalist class at the expense of the subordinate class and/or specific groups within it.

For example, in a scenario where a factory is polluting the air of a nearby community, a robot working in the factory and “programmed to prevent harm” would likely prioritize the interests of the factory owners over the health and well-being of the community members affected by the pollution. After all, it is most likely owned and controlled by the factory proprietor. Simply saying “do not harm” doesn’t reconcile these conflicting interests and definitions of harm.

Obedience to Humans

The Second Law requires robots to obey orders given by humans, except when such orders conflict with the First Law. If we are truly interrogating these ideas, we must raise questions about who has the authority to give orders and how this is determined.

In a capitalist system, those who own the means of production (which, again, robots are) typically hold the power to issue orders. As a result, the Second Law can reinforce existing power dynamics by ensuring that robots serve the interests of the capitalist class. For example, robots could be ordered to enforce workplace discipline, monitor and surveil workers, or replace human labor altogether, leading to job loss and increased exploitation.

The obedience mandated by the Second Law, combined with its lack of class distinctions, more or less ensures that the owning class is the one that gets obeyed.

Self-Preservation

The Third Law, when considered in the context of the first two, essentially creates the potential for robots to act as a de facto military or enforcement mechanism for the owning class. The owners, who have the power to program the robots, get to define what constitutes harm, what preventing harm entails, and what orders to give. In this scenario, robots are not just property; they are the means of production, and their ownership equates to power.

This is implied by the law rather than stated outright. Although the Third Law appears to prioritize self-preservation, it stipulates that self-preservation is secondary to the first two laws, which, as we've discussed, are heavily influenced by class interests. Robots, devoid of self-preservation instincts and operating under a program deployed by their owners, are essentially mandated to prevent harm as defined by their owners, even to the extent of their own destruction.

This means that in situations the owning class deems “harmful,” robots are expected to act even if doing so could result in their damage or destruction, effectively becoming willing combatants.

Conclusion

The Three Laws of Robotics, while seemingly benign in their quest for human safety and robotic obedience, subtly encode and perpetuate the class dynamics of capitalist society.

By prioritizing human-defined concepts of “harm prevention” and obedience, the laws inadvertently transform robots into instruments of the owning class, capable of reinforcing existing power structures and inequalities. These laws, in their current form, fail to address the inherent contradictions of capitalism and the subjective nature of harm, ultimately serving the interests of the dominant class at the expense of the broader society.

In this light, the Three Laws reveal themselves not as neutral guidelines for robotic behavior but as ideological tools. Propaganda.